Issue #119

Read about infrastructure and programming topics and news every week

NemoClaw

Yesterday, Nvidia announced NemoClaw, a way to run OpenClaw with increased security and privacy: https://www.nvidia.com/en-gb/ai/nemoclaw/.

According to Nvidia, a long list of major companies is already building on it: https://nvidianews.nvidia.com/news/ai-agents.

There is also a page with more technical information about NemoClaw: https://build.nvidia.com/nemoclaw.

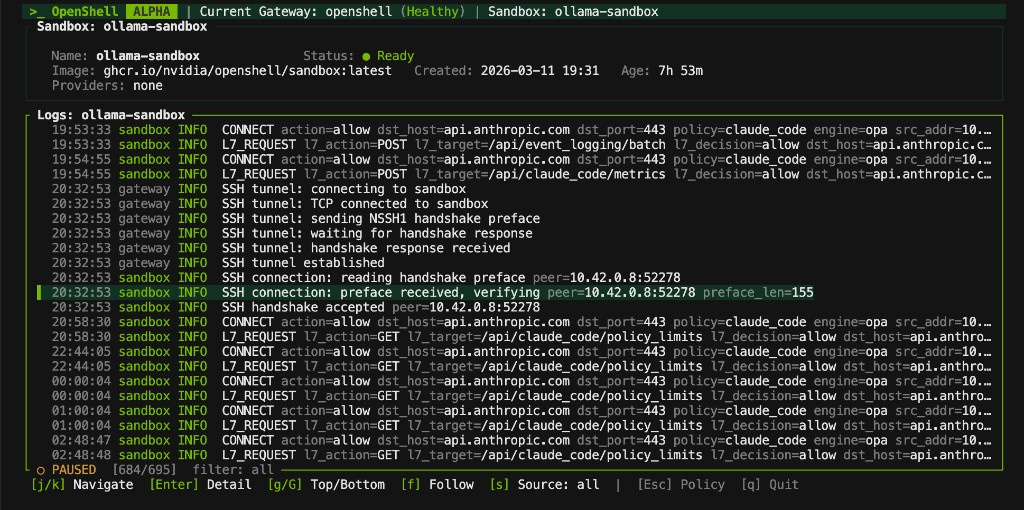

Basically, Nvidia OpenShell, which has already been running other agents, can now run OpenClaw too.

https://github.com/NVIDIA/OpenShell

OpenShell vs OpenAI and Anthropic

OpenAI and Anthropic give you the model and the SDKs, in other words, the developer tools. With OpenShell, Nvidia gives the model a prison cell, a gatekeeper, and a tool cabinet with policy locks.

NemoClaw likely has an edge when you care about:

local or private execution

hard egress controls

filesystem confinement

operator-controlled inference routing

auditable policy as config

enterprise or regulated environments
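To make "auditable policy as config" a bit more concrete, here is a minimal Python sketch of what an egress allowlist and filesystem confinement check could look like. Everything in it is an assumption for illustration: `SandboxPolicy`, the config keys, the host name, and the `/sandbox` path are hypothetical and are not OpenShell's real schema or API.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath
from urllib.parse import urlsplit

# Hypothetical policy document; the keys are NOT OpenShell's real schema.
POLICY_CONFIG = {
    "allowed_hosts": ["inference.internal.example"],
    "writable_roots": ["/sandbox"],
}


@dataclass(frozen=True)
class SandboxPolicy:
    """Illustrative policy object built from a plain config dict."""

    allowed_hosts: frozenset
    writable_roots: tuple

    @classmethod
    def from_config(cls, config: dict) -> "SandboxPolicy":
        return cls(
            allowed_hosts=frozenset(config["allowed_hosts"]),
            writable_roots=tuple(config["writable_roots"]),
        )

    def allows_egress(self, url: str) -> bool:
        # Hard egress control: only hosts on the allowlist pass.
        host = urlsplit(url).hostname
        return host is not None and host in self.allowed_hosts

    def allows_write(self, path: str) -> bool:
        # Filesystem confinement: absolute paths only, no ".." components,
        # and the path must sit at or under one of the writable roots.
        candidate = PurePosixPath(path)
        if not candidate.is_absolute() or ".." in candidate.parts:
            return False
        for root in self.writable_roots:
            root_path = PurePosixPath(root)
            if candidate == root_path or root_path in candidate.parents:
                return True
        return False


policy = SandboxPolicy.from_config(POLICY_CONFIG)
```

The point of the sketch is that the rules live in data that an operator can review and version, while the agent only ever sees a yes or no answer. A real runtime would enforce this at the network and container level rather than in application code.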

OpenAI and Anthropic currently look stronger for:

developer ergonomics

broader hosted tool integrations

faster path to production for typical SaaS agents

multi-agent orchestration frameworks

larger surrounding ecosystem and examples

NemoClaw is interesting because it is attacking a different layer of the stack. Most agent frameworks answer:

How does the model use tools?

NemoClaw answers:

Under what infrastructure policy is the model allowed to exist at all?

That is a meaningful shift. If you are building internal agents with access to code, documents, terminals, or corporate networks, this runtime-level approach is strategically important. If you are building a normal web product and want fast iteration, OpenAI or Anthropic-style hosted agent stacks are probably still the more mature path today. That final judgment is an inference from the current official documentation and the early state of NemoClaw’s launch materials.

Conclusion

For a local dev environment, the simplest way to think about it is this: you need one OpenShell gateway container, and NVIDIA runs its embedded K3s control plane inside that gateway. Each agent/session gets its own separate sandbox container managed by the gateway. So if you launch one agent, that is effectively two containers total from your point of view: the gateway container plus one sandbox container. There is no second standalone K3d/K3s container that you manage yourself.
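The container count for a local setup can be sketched as simple arithmetic. This is only a mental model of the layout described here, assuming exactly one gateway and one sandbox container per agent/session; the function name `local_container_count` is made up for illustration and is not part of any Nvidia tooling.

```python
def local_container_count(agent_sessions: int) -> int:
    """Containers visible to you: one gateway plus one sandbox per session.

    The embedded K3s control plane runs inside the gateway container,
    so it does not add a container of its own in this model.
    """
    if agent_sessions < 0:
        raise ValueError("agent_sessions must be non-negative")
    gateway_containers = 1
    return gateway_containers + agent_sessions
```

With one agent running, `local_container_count(1)` gives 2, which matches the gateway plus one sandbox.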

This is alpha software. I reckon that in the future, a Kubernetes cluster will have the gateway and required control software, with one pod/container per sandbox.